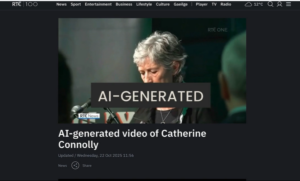

This video discusses how artificial intelligence is changing the way disinformation is created and spread to manipulate public opinion. It also outlines the importance of media literacy education and regulatory measures, referencing Ireland’s Online Safety and Media Regulation Act and the EU’s Digital Services Act.
